Nubus - ox-connector - Groups with special character “&” can’t be synced to Open-Xchange

Problem

If a group name contains the special character &, the ox-connector cannot synchronize the group to Open-Xchange.

This causes the ox-connector consumer to stop processing further messages, which leads to a broken synchronization state in the environment.

The issue occurs because the OX SOAP API rejects illegal characters during object creation.

Symptoms

- Groups with & in the name are not created in Open-Xchange.

- OX Connector stops processing NATS messages.

- Error messages appear in the OX Connector pod logs.

Logs from ox-connector Pod:

│ 2026-02-25 11:04:52,617 INFO [consumer.handle_message:245] Received message with topic: groups/group, sequence_number: 52, num_delivered: 1 │

│ 2026-02-25 11:04:52,618 INFO [__init__.get_group_objs:175] Group OX Test & Group will be OX Group │

│ 2026-02-25 11:04:52,618 INFO [consumer._get_existing_object_ox_id:138] Searching for uid=test.ox.01,cn=users,dc=swp-ldap,dc=internal! │

│ 2026-02-25 11:04:52,618 INFO [consumer._get_existing_object_ox_id:185] Loading object OX ID from known objects │

│ 2026-02-25 11:04:52,618 INFO [consumer._get_existing_object_ox_id:138] Searching for uid=test.ox.02,cn=users,dc=swp-ldap,dc=internal! │

│ 2026-02-25 11:04:52,618 INFO [consumer._get_existing_object_ox_id:185] Loading object OX ID from known objects │

│ 2026-02-25 11:04:52,619 INFO [__init__.get_group_objs:206] Object('groups/group', 'cn=ox test & group,cn=groups,dc=swp-ldap,dc=internal') will be processed with context 1 │

│ 2026-02-25 11:04:52,619 INFO [groups.create_group:104] Creating Object('groups/group', 'cn=ox test & group,cn=groups,dc=swp-ldap,dc=internal') │

│ 2026-02-25 11:04:52,949 INFO [groups.update_group:69] Retrieving members... │

│ 2026-02-25 11:04:52,950 INFO [consumer._get_existing_object_ox_id:138] Searching for uid=test.ox.01,cn=users,dc=swp-ldap,dc=internal! │

│ 2026-02-25 11:04:52,950 INFO [consumer._get_existing_object_ox_id:185] Loading object OX ID from known objects │

│ 2026-02-25 11:04:52,950 INFO [groups.update_group:74] ... found 3 │

│ 2026-02-25 11:04:52,950 INFO [consumer._get_existing_object_ox_id:138] Searching for uid=test.ox.02,cn=users,dc=swp-ldap,dc=internal! │

│ 2026-02-25 11:04:52,950 INFO [consumer._get_existing_object_ox_id:185] Loading object OX ID from known objects │

│ 2026-02-25 11:04:52,950 INFO [groups.update_group:74] ... found 4 │

│ 2026-02-25 11:04:52,984 ERROR [consumer.create:343] Failed to handle creation │

│ Traceback (most recent call last): │

│ File "/consumer.py", line 318, in create │

│ run(obj) │

│ File "/usr/local/lib/python3.11/dist-packages/univention/ox/provisioning/__init__.py", line 121, in run │

│ create_group(new_obj) │

│ File "/usr/local/lib/python3.11/dist-packages/univention/ox/provisioning/groups.py", line 123, in create_group │

│ group.create() │

│ File "/usr/local/lib/python3.11/dist-packages/univention/ox/soap/backend.py", line 224, in create │

│ new_obj = self.service(self.context_id).create(obj) │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/dist-packages/univention/ox/soap/services.py", line 655, in create │

│ return self._call_ox('create', grp=grp) │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/dist-packages/univention/ox/soap/services.py", line 203, in _call_ox │

│ return getattr(service, func)(**kwargs) │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/dist-packages/zeep/proxy.py", line 46, in __call__ │

│ return self._proxy._binding.send( │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/dist-packages/zeep/wsdl/bindings/soap.py", line 135, in send │

│ return self.process_reply(client, operation_obj, response) │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/dist-packages/zeep/wsdl/bindings/soap.py", line 229, in process_reply │

│ return self.process_error(doc, operation)

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/dist-packages/zeep/wsdl/bindings/soap.py", line 329, in process_error │

│ raise Fault( │

│ zeep.exceptions.Fault: Illegal chars: "&"; exceptionId 192484105-4

Root cause exception from OX SOAP backend:

zeep.exceptions.Fault: Illegal chars: "&"

Root Cause

This problem is a limitation of the Open-Xchange SOAP provisioning API.

Reference:

https://forge.univention.org/bugzilla/show_bug.cgi?id=59077

The OX backend does not allow special characters such as & in group names during SOAP object creation. When such a message is processed:

- The group object is received via NATS provisioning stream.

- The OX Connector tries to create the group via SOAP.

- The SOAP call fails due to invalid character validation.

- The message remains in the NATS stream and blocks further processing.

Investigation

Check LDAP Group Exists

kubectl exec -n $NAMESPACE ums-ldap-server-primary-0 -- ldapsearch -x \

-D "$(kubectl get -n $NAMESPACE configmaps ums-ldap-server-primary -o json | jq -r '.data.ADMIN_DN')" \

-w "$(kubectl get -n $NAMESPACE secrets ums-ldap-server-admin -o json | jq -r '.data.password' | base64 -d)" \

-b "$(kubectl get -n $NAMESPACE configmaps ums-ldap-server-primary -o json | jq -r '.data.LDAP_BASEDN')" \

cn="OX Test & Group"

Result confirms LDAP object exists:

Defaulted container "main" out of: main, leader-elector, univention-compatibility (init), load-internal-plugins (init), load-ox-extension (init), load-opendesk-extension (init), load-portal-extension (init), load-opendesk-a2g-mapper-extension (init), wait-for-saml-metadata (init)

# extended LDIF

#

# LDAPv3

# base <dc=swp-ldap,dc=internal> with scope subtree

# filter: cn=OX Test & Group

# requesting: ALL

#

# OX Test & Group, groups, swp-ldap.internal

dn: cn=OX Test & Group,cn=groups,dc=swp-ldap,dc=internal

cn: OX Test & Group

gidNumber: 5024

sambaGroupType: 2

univentionGroupType: -2147483646

univentionObjectIdentifier: 351ac6fd-0a25-45bb-9e1f-843d31e388a7

isOxGroup: OK

oxContextIDNum: 1

opendeskFileshareEnabled: TRUE

opendeskProjectmanagementEnabled: TRUE

opendeskKnowledgemanagementEnabled: TRUE

opendeskLivecollaborationEnabled: TRUE

sambaSID: S-1-5-21-UNSET-11049

uniqueMember: uid=test.ox.02,cn=users,dc=swp-ldap,dc=internal

uniqueMember: uid=test.ox.01,cn=users,dc=swp-ldap,dc=internal

memberUid: test.ox.02

memberUid: test.ox.01

objectClass: opendeskKnowledgemanagementGroup

objectClass: univentionObject

objectClass: univentionGroup

objectClass: sambaGroupMapping

objectClass: posixGroup

objectClass: top

objectClass: opendeskFileshareGroup

objectClass: opendeskLivecollaborationGroup

objectClass: opendeskProjectmanagementGroup

objectClass: oxGroup

univentionObjectType: groups/group
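To find every affected group instead of checking one name at a time, the LDIF output can be filtered for entries whose cn contains &. The following is a minimal sketch, not part of the product: the function name `ldif_dns_with_ampersand` is hypothetical, and it assumes unwrapped LDIF (for example produced with the OpenLDAP option `-o ldif-wrap=no`) and plain, non-base64 `cn:` values.

```shell
# Hypothetical helper: reads LDIF on stdin and prints the DN of every
# entry that has a "cn:" value containing the character "&".
# Assumes one attribute per line (no LDIF line wrapping) and that
# entries are separated by an empty line, as in standard LDIF.
ldif_dns_with_ampersand() {
  awk '
    /^dn: /  { dn = substr($0, 5); next }            # remember the current DN
    /^cn: / && index($0, "&") && dn != "" {           # cn value contains "&"
      print dn
      dn = ""                                         # report each entry once
    }
    /^$/     { dn = "" }                              # empty line ends the entry
  '
}
```

It could be fed from the same ldapsearch call as above, with the filter changed to "(objectClass=oxGroup)" so that all OX groups are listed.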

Workaround

Step 1: Rename the group using “and” or remove the group

The simplest and recommended solution is to modify the group name through UMC:

- Open UMC.

- Edit the affected group.

- Replace the character & in the group name with and, or remove the character.

- Save the changes.

Example:

OX Test & Group → OX Test and Group

or

OX Test & Group → OX Test Group

This prevents SOAP provisioning failures during synchronization.
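When several groups need renaming, a replacement name can be derived mechanically. This is only a sketch of the first renaming rule above; the function name `suggest_ox_safe_name` is hypothetical, and it handles only the character &, which is the only character the source confirms as rejected.

```shell
# Hypothetical helper: prints a suggested group name in which every "&"
# (including surrounding whitespace) is replaced by " and ".
# The rename itself still has to be done in UMC.
suggest_ox_safe_name() {
  printf '%s\n' "$1" | sed 's/[[:space:]]*&[[:space:]]*/ and /g'
}

suggest_ox_safe_name 'OX Test & Group'   # prints: OX Test and Group
```

A name that starts or ends with & would get a leading or trailing space from this rule, so the suggestion should be reviewed before it is used.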

Step 2: Remove Stuck Messages from NATS Stream (Recovery)

If the OX Connector is already stuck and not processing messages, perform a NATS message cleanup.

2.1 Enable NATS Debug Container

Activate the NATS debug container via Helm values as described in our docs:

Provisioning Service

nubusProvisioning.nats.natsBox.enabled: true

Hint:

The natsBox debug container of the bundled NATS isn’t deployed by default. To explicitly activate the debug container, set nubusProvisioning.nats.natsBox.enabled to true.

Example modification:

cat helmfile/environments/dev/values.yaml.gotmpl | yq ".nubusProvisioning.nats.natsBox.enabled=true" > helmfile/environments/dev/test.values.yaml

Verify configuration:

cat helmfile/environments/dev/test.values.yaml

Example output:

replicas:

umsPortalServer: 2

certificate:

issuerRef:

name: "letsencrypt-prod-http"

jitsi:

enabled: false

nubusProvisioning:

nats:

natsBox:

enabled: true

2.2 Re-deploy Environment

Apply Helm configuration changes:

helmfile apply -e dev -n ${NAMESPACE}

Step 3: Access NATS Debug Shell

From your local client, you can access the NATS debug shell as described in our docs:

https://docs.software-univention.de/nubus-kubernetes-operation/latest/en/troubleshooting-nats.html#prerequisites

Retrieve credentials:

NATS_USER=admin

NATS_PASSWORD=$(kubectl get secrets \

-n ${NAMESPACE} \

ums-provisioning-nats-admin \

-o"jsonpath={.data.password}" | base64 -d)

Start debug container:

kubectl debug \

-n ${NAMESPACE} \

-it ums-provisioning-nats-0 \

--env NATS_USER="${NATS_USER}" \

--env NATS_PASSWORD="${NATS_PASSWORD}" \

--image=docker.io/natsio/nats-box:0.18.1-nonroot \

-- /bin/sh

Create NATS context:

nats context add nubus \

--server nats://127.0.0.1:4222 \

--user "${NATS_USER}" \

--password "${NATS_PASSWORD}"

Output:

NATS Configuration Context "nubus"

Server URLs: nats://127.0.0.1:4222

Username: admin

Password: *****************************************

Path: /nsc/.config/nats/context/nubus.json

WARNING: Shell environment overrides in place using NATS_USER, NATS_PASSWORD

Step 4: Identify Problematic Stream Message Sequence

List streams:

nats stream ls

~ $ nats stream ls

╭─────────────────────────────────────────────────────────────────────────────────────────────────╮

│ Streams │

├─────────────────────────┬─────────────┬─────────────────────┬──────────┬─────────┬──────────────┤

│ Name │ Description │ Created │ Messages │ Size │ Last Message │

├─────────────────────────┼─────────────┼─────────────────────┼──────────┼─────────┼──────────────┤

│ stream:incoming │ │ 2026-02-24 19:30:01 │ 0 │ 0 B │ 12m10s │

│ stream:ldap-producer │ │ 2026-02-24 19:32:04 │ 0 │ 0 B │ 12m10s │

│ stream:portal-consumer │ │ 2026-02-24 19:40:34 │ 0 │ 0 B │ 12m10s │

│ stream:prefill │ │ 2026-02-24 19:30:03 │ 0 │ 0 B │ 15h47m23s │

│ stream:prefill-failures │ │ 2026-02-24 19:32:05 │ 0 │ 0 B │ never │

│ stream:selfservice │ │ 2026-02-24 19:40:35 │ 0 │ 0 B │ 2h48m58s │

│ stream:ox-connector │ │ 2026-02-24 19:40:33 │ 2 │ 2.8 KiB │ 12m10s │

╰─────────────────────────┴─────────────┴─────────────────────┴──────────┴─────────┴──────────────╯

Relevant stream:

stream:ox-connector

Check stream info. You will find the needed sequence number at the end of the output:

nats stream info stream:ox-connector

~ $ nats stream info stream:ox-connector

Information for Stream stream:ox-connector created 2026-02-24 19:40:33

Subjects: ox-connector.main, ox-connector.prefill

Replicas: 1

Storage: File

Options:

Retention: Limits

Acknowledgments: true

Discard Policy: Old

Duplicate Window: 2m0s

Allows Batch Publish: false

Allows Counters: false

Allows Msg Delete: true

Allows Per-Message TTL: false

Allows Purge: true

Allows Rollups: false

Limits:

Maximum Messages: unlimited

Maximum Per Subject: unlimited

Maximum Bytes: unlimited

Maximum Age: unlimited

Maximum Message Size: unlimited

Maximum Consumers: unlimited

State:

Host Version: 2.11.9

Required API Level: 0 hosted at level 1

Messages: 2

Bytes: 2.8 KiB

First Sequence: 52 @ 2026-02-25 11:04:52

Last Sequence: 53 @ 2026-02-25 11:15:48

Active Consumers: 1

Number of Subjects: 1

Locate the message sequence number:

First Sequence: 52 @ 2026-02-25 11:04:52

Last Sequence: 53 @ 2026-02-25 11:15:48
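The first sequence number can also be read from the stream info output with a small filter. This is a sketch that parses the human-readable format shown above; the function name `stream_first_sequence` is hypothetical, and the output layout may differ between nats CLI versions.

```shell
# Hypothetical helper: reads "nats stream info" output on stdin and
# prints the number from the "First Sequence:" line.
stream_first_sequence() {
  awk '/First Sequence:/ { print $3; exit }'
}
```

Inside the debug shell it could be used as `nats stream info stream:ox-connector | stream_first_sequence`.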

Hint:

The order of the messages indicates which sequence causes the problem: the first entry should be the root cause. The OX Connector logs also show the relevant sequence number:

INFO [consumer.handle_message:245] Received message with topic: groups/group, sequence_number: 52, num_delivered: 1

<skip>
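The sequence number of the failing message can be extracted from the OX Connector logs as well. The sketch below pairs each "Received message" line with a following error line. The function name `failing_sequence` is hypothetical, and the match on "Failed to handle" is an assumption based on the single "Failed to handle creation" line shown in the logs above.

```shell
# Hypothetical helper: reads ox-connector log lines on stdin and prints
# the sequence number of each message that was followed by an ERROR line
# containing "Failed to handle". Repeated deliveries are printed once.
failing_sequence() {
  awk '
    /Received message with topic:/ {
      # "sequence_number: " is 17 characters long; keep only the digits.
      if (match($0, /sequence_number: [0-9]+/)) {
        seq = substr($0, RSTART + 17, RLENGTH - 17)
      }
    }
    /ERROR/ && /Failed to handle/ && seq != "" { print seq }
  ' | sort -un
}
```

The input would typically come from `kubectl logs` for the ox-connector pod in ${NAMESPACE}; the pod name depends on the deployment.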

Step 5: Remove Only the Problematic Message (IMPORTANT)

Do not remove the entire stream with rm.

Use rmm (stream rmm: securely removes an individual message from a Stream) instead of rm.

nats stream rmm stream:ox-connector

~ $ nats stream rmm stream:ox-connector

? Message Sequence to remove 52

? Really remove message 52 from Stream stream:ox-connector Yes
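Before answering the prompt, it can help to check that the chosen sequence number actually lies between the First Sequence and Last Sequence values reported by the stream info. The following guard is a sketch only; the function name `sequence_in_stream_range` is hypothetical and it does not call nats itself.

```shell
# Hypothetical guard: succeeds only if all three arguments are
# non-negative integers and first <= seq <= last.
# Usage: sequence_in_stream_range SEQ FIRST LAST
sequence_in_stream_range() {
  for n in "$1" "$2" "$3"; do
    case "$n" in
      ''|*[!0-9]*) return 2 ;;   # reject empty or non-numeric input
    esac
  done
  [ "$1" -ge "$2" ] && [ "$1" -le "$3" ]
}
```

With the values from this example, `sequence_in_stream_range 52 52 53 && nats stream rmm stream:ox-connector` would only start the removal dialog when the number is plausible.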

Step 6: Restart the OX Connector Pod to resume processing

In k9s, you can kill the pod. It then restarts and continues consuming messages.

<ctrl-k> Kill

Results

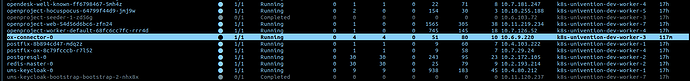

The pod is running and healthy again.

The message with sequence number 52 has been deleted.

~ $ nats stream info stream:ox-connector

Information for Stream stream:ox-connector created 2026-02-24 19:40:33

Subjects: ox-connector.main, ox-connector.prefill

Replicas: 1

Storage: File

Options:

Retention: Limits

Acknowledgments: true

Discard Policy: Old

Duplicate Window: 2m0s

Allows Batch Publish: false

Allows Counters: false

Allows Msg Delete: true

Allows Per-Message TTL: false

Allows Purge: true

Allows Rollups: false

Limits:

Maximum Messages: unlimited

Maximum Per Subject: unlimited

Maximum Bytes: unlimited

Maximum Age: unlimited

Maximum Message Size: unlimited

Maximum Consumers: unlimited

State:

Host Version: 2.11.9

Required API Level: 0 hosted at level 1

Messages: 1

Bytes: 1.4 KiB

First Sequence: 53 @ 2026-02-25 11:15:48

Last Sequence: 53 @ 2026-02-25 11:15:48

Active Consumers: 1

Number of Subjects: 1

Successful Processing Logs

/entrypoint.sh: /entrypoint.d/ is not empty, will attempt to perform configuration │

│ /entrypoint.sh: Looking for shell scripts in /entrypoint.d/ │

│ /entrypoint.sh: Launching /entrypoint.d/75-entrypoint.sh │

│ /entrypoint.sh: Configuration complete; ready for start up │

│ 2026-02-25 11:30:42,769 INFO [consumer.main:462] Using 'nubus-provisioning-consumer' library version '0.64.0'. │

│ 2026-02-25 11:30:42,769 INFO [consumer.start_listening_for_changes:219] Listening for changes in topics: {'oxmail/accessprofile', 'oxmail/functional_account', 'oxmail/oxcontext', 'groups/group', 'oxresource │

│ 2026-02-25 11:30:43,216 INFO [consumer.handle_message:245] Received message with topic: groups/group, sequence_number: 53, num_delivered: 1 │

│ 2026-02-25 11:30:43,217 INFO [__init__.get_group_objs:170] Group OX Test & Group was OX Group │

│ 2026-02-25 11:30:43,217 INFO [consumer._get_existing_object_ox_id:138] Searching for uid=test.ox.01,cn=users,dc=swp-ldap,dc=internal! │

│ 2026-02-25 11:30:43,217 INFO [consumer._get_existing_object_ox_id:185] Loading object OX ID from known objects │

│ 2026-02-25 11:30:43,217 INFO [consumer._get_existing_object_ox_id:138] Searching for uid=test.ox.02,cn=users,dc=swp-ldap,dc=internal! │

│ 2026-02-25 11:30:43,217 INFO [consumer._get_existing_object_ox_id:185] Loading object OX ID from known objects │

│ 2026-02-25 11:30:43,217 INFO [__init__.get_group_objs:206] Object('groups/group', 'cn=ox test & group,cn=groups,dc=swp-ldap,dc=internal') will be processed with context 1 │

│ 2026-02-25 11:30:43,217 INFO [groups.delete_group:163] Deleting Object('groups/group', 'cn=ox test & group,cn=groups,dc=swp-ldap,dc=internal') │

│ 2026-02-25 11:30:43,632 INFO [groups.delete_group:166] Object('groups/group', 'cn=ox test & group,cn=groups,dc=swp-ldap,dc=internal') does not exist. Doing nothing... │

│ 2026-02-25 11:30:43,632 INFO [consumer.remove:442] Removing object OX ID from known objects │

│ 2026-02-25 11:30:43,632 INFO [consumer.remove:444] Removing object OX DB ID from known objects │

│ 2026-02-25 11:30:43,632 INFO [consumer.remove:446] Removing object username from known objects │

│ 2026-02-25 11:30:43,632 INFO [consumer.remove:448] Removing object from non ox objects │

│ 2026-02-25 11:30:43,632 INFO [consumer.handle_message:296] Committing database entries │

│ 2026-02-25 11:30:43,646 INFO [api.acknowledge_message_with_retries:155] Message 53 was acknowledged.
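To confirm recovery without reading the whole log, the acknowledgment line can be searched for directly. This is a minimal sketch based on the log line format shown above; the function name `message_acknowledged` is hypothetical.

```shell
# Hypothetical helper: reads ox-connector log lines on stdin and succeeds
# if the message with the given sequence number was acknowledged.
# Usage: message_acknowledged SEQ < logfile
message_acknowledged() {
  grep -Fq "Message $1 was acknowledged"
}
```

For this example, piping the pod logs into `message_acknowledged 53` should succeed once the connector has resumed processing.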

Important Notes

- Always verify sequence numbers before removing messages.

- Use rmm instead of rm to avoid deleting the full stream.

- Deployments may need to be restarted after configuration changes.

Conclusion

This issue is caused by invalid character validation in the Open-Xchange SOAP provisioning API.

Removing special characters from group names or cleaning failed NATS messages resolves the synchronization blockage.